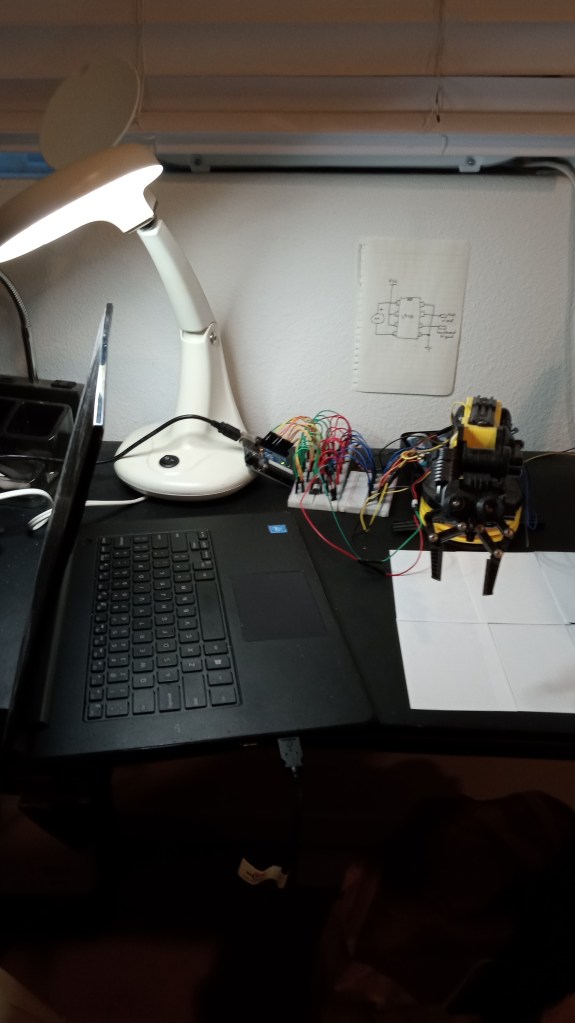

This is the setup that I used to control a robot arm over the internet. Starting on the right, there is the robot arm, an OWI-535 kit which I assembled last August. Moving to the left, there is the interface circuitry, made up of L9110 motor controllers for the five low-voltage DC motors on the robot arm. The motor controllers are driven by five-volt high/low input signals from an Arduino, and the Arduino in turn is driven by serial commands from an old laptop that I had in storage.
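To make the laptop-to-Arduino-to-L9110 chain concrete, here is a minimal Python sketch of how a serial command might map to the two input levels of one motor controller. The two-character command format, the function names, and the motor numbering are all assumptions for illustration; the actual project used Processing on the laptop, and its real command format isn't described here. Which direction counts as "forward" also depends on how the motor is wired.

```python
# Hypothetical command format: one digit (motor index) + one letter (direction),
# terminated by a newline. None of this comes from the original project code.

MOTORS = ("base", "shoulder", "elbow", "wrist", "claw")

# Input levels (IA, IB) for one L9110 channel; 1 = five-volt high, 0 = low.
LEVELS = {
    "F": (1, 0),  # current flows one way through the motor
    "R": (0, 1),  # current flows the other way
    "S": (0, 0),  # both inputs low: motor off
}


def encode(motor, direction):
    """Laptop side: build the bytes to write to the serial port."""
    if motor not in MOTORS:
        raise ValueError(f"unknown motor: {motor!r}")
    if direction not in LEVELS:
        raise ValueError(f"unknown direction: {direction!r}")
    return f"{MOTORS.index(motor)}{direction}\n".encode("ascii")


def decode(line):
    """Arduino side (modelled in Python): command -> (motor index, (IA, IB))."""
    text = line.decode("ascii").strip()
    if len(text) != 2 or text[0] not in "01234" or text[1] not in LEVELS:
        raise ValueError(f"bad command: {text!r}")
    return int(text[0]), LEVELS[text[1]]


if __name__ == "__main__":
    command = encode("elbow", "F")
    print(command, decode(command))
```

On real hardware the decoded pair would be written to the two Arduino output pins wired to that controller's inputs, and a stop command would be sent when the key is released.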

Here’s the robot arm:

Here’s the interface circuitry on the breadboard, with the motor controllers connected between the arm and an Arduino:

The color coding of the jumpers proved very useful. Red is the power voltage for the robot arm motors, green is ground, blue and black send current through the motors, and yellow and orange are inputs from the Arduino. Note that the wiring that came with the robot kit follows a different color scheme.

Anyhow, the reason I dwell on the color coding of the jumpers is that I originally tested the system with just one motor controller connected to one motor, and it was a confusing jumble because I just randomly attached jumpers to Get ’Er Done. Then I looked at the mess and wondered, “How am I going to do this for five motor controllers without becoming totally confused?” But then I used color coding, and I had only a couple of wiring issues, one of them being the classic bungle of forgetting to tie the grounds together.

BTW, you may notice the pin diagram of the L9110 on the wall behind the setup. The chips are turned the other way from the diagram, but if you allow for that, you’ll see how the jumpers match.

So how does this communicate over the internet? Well, the ‘local’ computer shown in this layout is connected to the wi-fi in my apartment. The computer is running a program that I wrote in Processing (with a little help from ChatGPT) which displays the webcam view and has a simple user interface for controlling the robot. But what of the ‘Magical Part’, where the system is controlled over the internet?

I toyed with some ideas about how to accomplish that, including using Zoom, a white cardboard screen, a black-tipped wand, and photoresistors placed against the local laptop screen. In the end, I decided to ask ChatGPT if there was a way to control one computer with another over the internet. And so it informed me of . . . .

Google Chrome has an extension called Chrome Remote Desktop, which (just as I had requested from Chat) allows one computer to control another one over the internet. Chrome Remote Desktop was created so that office workers could stay at home and remotely access their office computers from their home computer.

It’s pretty simple to use. You load the software onto both computers, then you sit at home and access your office computer. Your home computer will show the screen of your office computer, and the office computer will respond to commands that you input with mouse and keyboard on your home computer.

Chrome Remote Desktop requires a Google account (such as a Gmail account) to tie the two computers together, and otherwise has no special requirements. You can obtain it for free from the Chrome Web Store.

Happy to say that Remote Desktop works like a charm. I loaded it on my local and remote PC laptops, then took the remote laptop to the nearby library, about half a kilometer away. I logged into Remote Desktop and sure enough, the remote PC screen reproduced the local PC screen, with an image of the robot arm and the user interface. With no significant problems, the arm responded to my keyboard inputs (albeit with some lag — more on that later).

Here is the YouTube video link:

The user interface is very simple. You select which motor to use by pressing the left and right arrow keys on the keyboard. The schematic beneath the camera view shows which segment motor has been selected.

You then press the up or down arrow key to operate the selected motor. The up arrow turns the base counterclockwise, elevates the shoulder, elbow, or wrist segment, and opens the claw, as in “Open Up.” The down arrow key runs the selected motor in the opposite direction, with the claw convention being “Chomp Down.” (I thought this was the most intuitive thing to do.)
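The key scheme above amounts to a tiny state machine: one "selected motor" index plus a lookup of what up and down mean for each segment. Here is a rough Python sketch of that logic. The class and key names are made up for illustration, and two details are assumptions rather than facts about the real interface: that the right arrow steps from base toward claw, and that selection stops at the ends instead of wrapping around.

```python
# Hypothetical model of the arrow-key interface; not the original Processing code.

SEGMENTS = ("base", "shoulder", "elbow", "wrist", "claw")

# What the up arrow does per segment; the down arrow does the opposite.
UP_ACTION = {
    "base": "counterclockwise",
    "shoulder": "raise",
    "elbow": "raise",
    "wrist": "raise",
    "claw": "open",
}
DOWN_ACTION = {
    "base": "clockwise",
    "shoulder": "lower",
    "elbow": "lower",
    "wrist": "lower",
    "claw": "close",
}


class ArmControl:
    def __init__(self):
        self.selected = 0  # start on the base (assumption)

    def on_key(self, key):
        """Handle one key press; return (segment, action) or None."""
        if key == "LEFT":
            self.selected = max(0, self.selected - 1)
            return None
        if key == "RIGHT":
            self.selected = min(len(SEGMENTS) - 1, self.selected + 1)
            return None
        segment = SEGMENTS[self.selected]
        if key == "UP":
            return segment, UP_ACTION[segment]
        if key == "DOWN":
            return segment, DOWN_ACTION[segment]
        return None  # ignore every other key


if __name__ == "__main__":
    control = ArmControl()
    for key in ("UP", "RIGHT", "RIGHT", "DOWN"):
        print(key, control.on_key(key))
```

Keeping the selection and the action lookup separate means the on-screen schematic only needs to read `selected` to know which segment to highlight.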

I wanted to avoid requiring the user to constantly shift gaze between the camera and the user interface. Thus the schematic is placed directly below the camera image in the same configuration as the arm, and is brightly color coded. This is so you can keep your eyes on the camera view while still being able to see the user interface schematic at the bottom of your field of vision.

Prior to this user-input design, I had briefly considered using a mouse and on-screen buttons for selecting and running the motors, but again, that would require the user to take their eyes off the camera view. So I went with the arrow keys instead.

In summary: you can operate the robot by keeping your eyes on the camera view and resting your hand on the arrow keys, allowing you to concentrate on robot arm operations instead of engaging with the interface.

So where do we go from here?

I’d like to cut down on lag. From what I’ve been able to tell so far, the lag is primarily due to the limitations of my ‘local’ computer, which is old and not very powerful. The operation is lagless when I’m operating the robot from the local computer directly, but apparently the local computer’s CPU can’t handle the burden of both operating the program and running under Remote Desktop. Anyhow, there certainly is room for improvement in the lag department.

I want to upgrade to bigger and more versatile robot systems. As you can see, half the user interface screen is unused, and that offers opportunity. For example, I could tie in more cameras and sensor data, maybe even operate multiple robots with the same interface. I’d like to experiment with a game pad controller at some point.

I’m looking into commercial applications. Space and military applications are of course already being handled by people with a lot more budget and knowledge than I have, so I wouldn’t be able to compete there. Where I do fit in is with more ‘everyday’ applications. Perhaps some landscaping, janitorial, warehouse, and factory jobs could be done remotely. In a step up from that, laboratory technicians could more safely do their analyses remotely, handling test tubes, petri dishes, and other equipment with robot grippers.

I welcome application suggestions, especially if you run a small business and have an idea of how ‘interbotics’ could be of use to you.
